X-Ray: A Sequential 3D Representation for Generation

Tao Hu¹, Wenhang Ge²*, Yuyang Zhao¹*, Gim Hee Lee¹

¹ National University of Singapore, ² HKUST(GZ)

NeurIPS 2024, Spotlight

* These authors contributed equally to this work.

Abstract

We introduce X-Ray, a novel 3D sequential representation inspired by the penetrability of x-ray scans. X-Ray transforms a 3D object into a series of surface frames at different layers, making it suitable for generating 3D models from images. Our method casts rays from the camera center to capture geometric and textured details, including depth, normal, and color, across all intersected surfaces. This process efficiently condenses the whole 3D object into a multi-frame video format, motivating the use of a network architecture similar to those in video diffusion models. This design ensures an efficient 3D representation by focusing solely on surface information. We demonstrate the practicality and adaptability of our X-Ray representation by synthesizing the complete visible and hidden surfaces of a 3D object from a single input image, which paves the way for new 3D representation research and practical applications.
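The multi-hit ray casting described above differs from ordinary rendering in that it keeps every intersection along a ray rather than only the nearest one. The sketch below illustrates the idea on a toy scene of analytic spheres. The `Sphere` type, the `all_hits` helper, and the choice to measure depth as the distance along a unit-length ray are hypothetical illustrations, not the authors' implementation, which operates on arbitrary 3D meshes.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Sphere:
    """Toy analytic surface standing in for a mesh (hypothetical)."""
    center: Vec3
    radius: float
    color: Vec3


def sub(a: Vec3, b: Vec3) -> Vec3:
    return tuple(x - y for x, y in zip(a, b))


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def normalize(v: Vec3) -> Vec3:
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)


def all_hits(origin: Vec3, direction: Vec3, spheres: list[Sphere]):
    """Return every (depth, normal, color) hit along the ray, nearest first.

    A standard renderer would keep only hits[0]; here the entry and exit
    points of every sphere are kept, so hidden surfaces are recorded too.
    Depth is the distance along the normalized ray (an assumption).
    """
    d = normalize(direction)
    hits = []
    for s in spheres:
        # Solve |origin + t*d - center|^2 = r^2, i.e. t^2 + 2bt + c = 0.
        oc = sub(origin, s.center)
        b = dot(oc, d)
        c = dot(oc, oc) - s.radius ** 2
        disc = b * b - c
        if disc <= 0:
            continue  # miss, or a grazing tangent hit we ignore
        root = math.sqrt(disc)
        for t in (-b - root, -b + root):  # entry point, then exit point
            if t > 1e-9:  # keep only hits in front of the camera
                p = tuple(o + t * di for o, di in zip(origin, d))
                normal = normalize(sub(p, s.center))  # outward surface normal
                hits.append((t, normal, s.color))
    hits.sort(key=lambda h: h[0])  # layer order = increasing depth
    return hits


# Usage: a hollow shell of radius 2 enclosing a core of radius 0.5.
scene = [Sphere((0.0, 0.0, 0.0), 2.0, (1.0, 0.0, 0.0)),
         Sphere((0.0, 0.0, 0.0), 0.5, (0.0, 1.0, 0.0))]
print([h[0] for h in all_hits((0.0, 0.0, -5.0), (0.0, 0.0, 1.0), scene)])
# → [3.0, 4.5, 5.5, 7.0]
```

For a ray fired along the z-axis from (0, 0, -5), `all_hits` returns four surfaces: the front of the outer shell, the front and back of the inner core, and the back of the outer shell. A nearest-hit renderer would report only the first of these.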

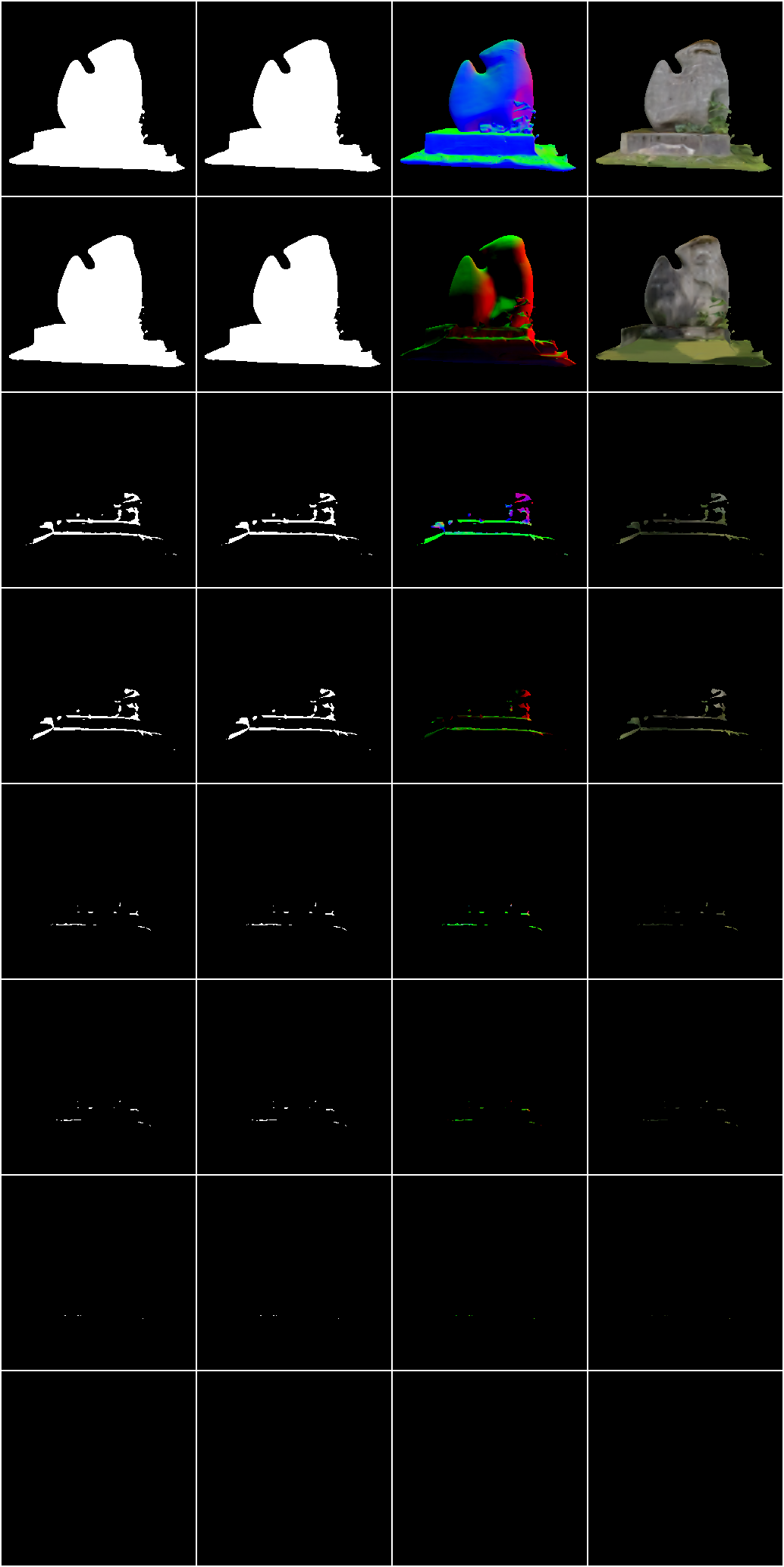

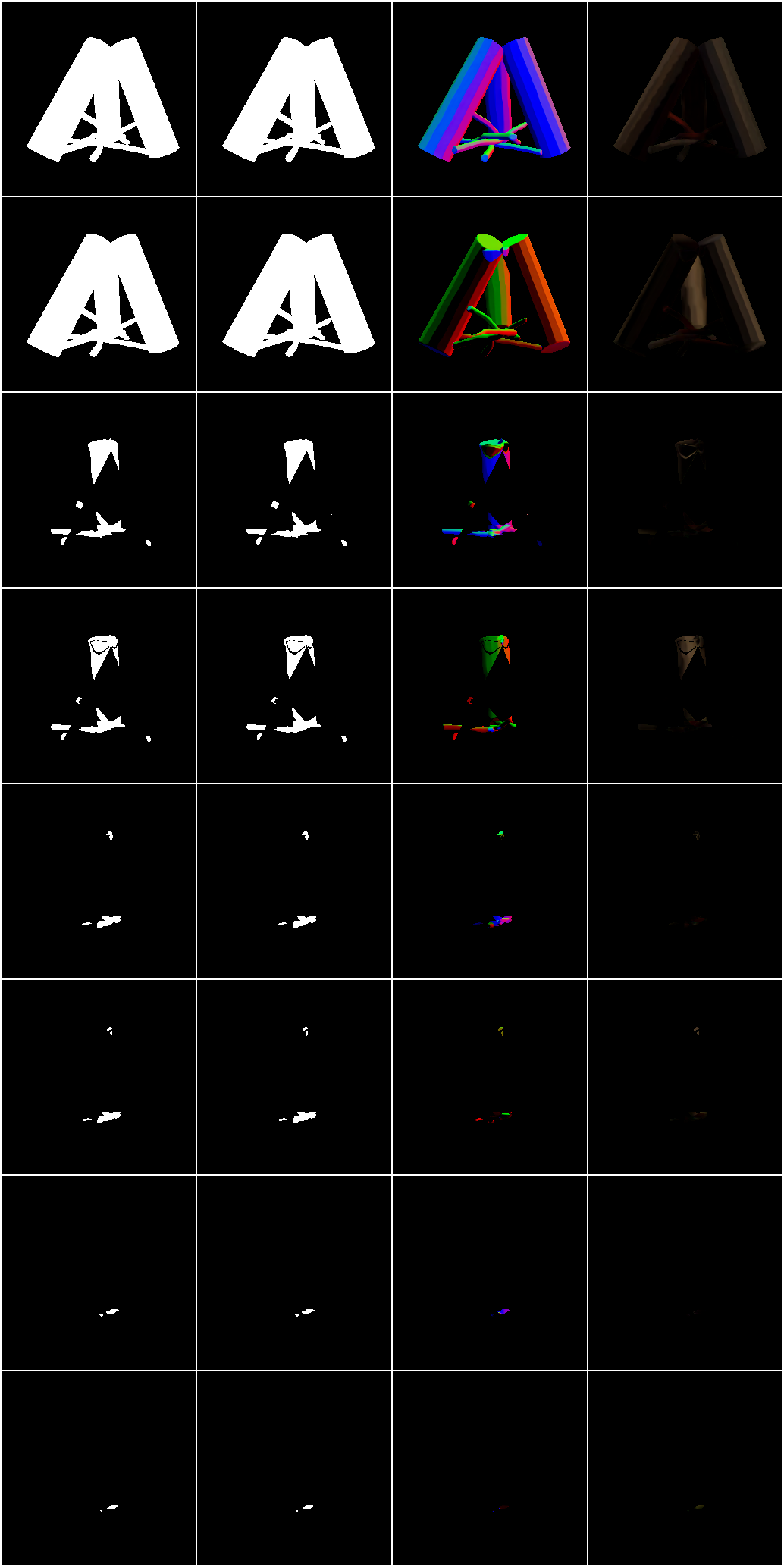

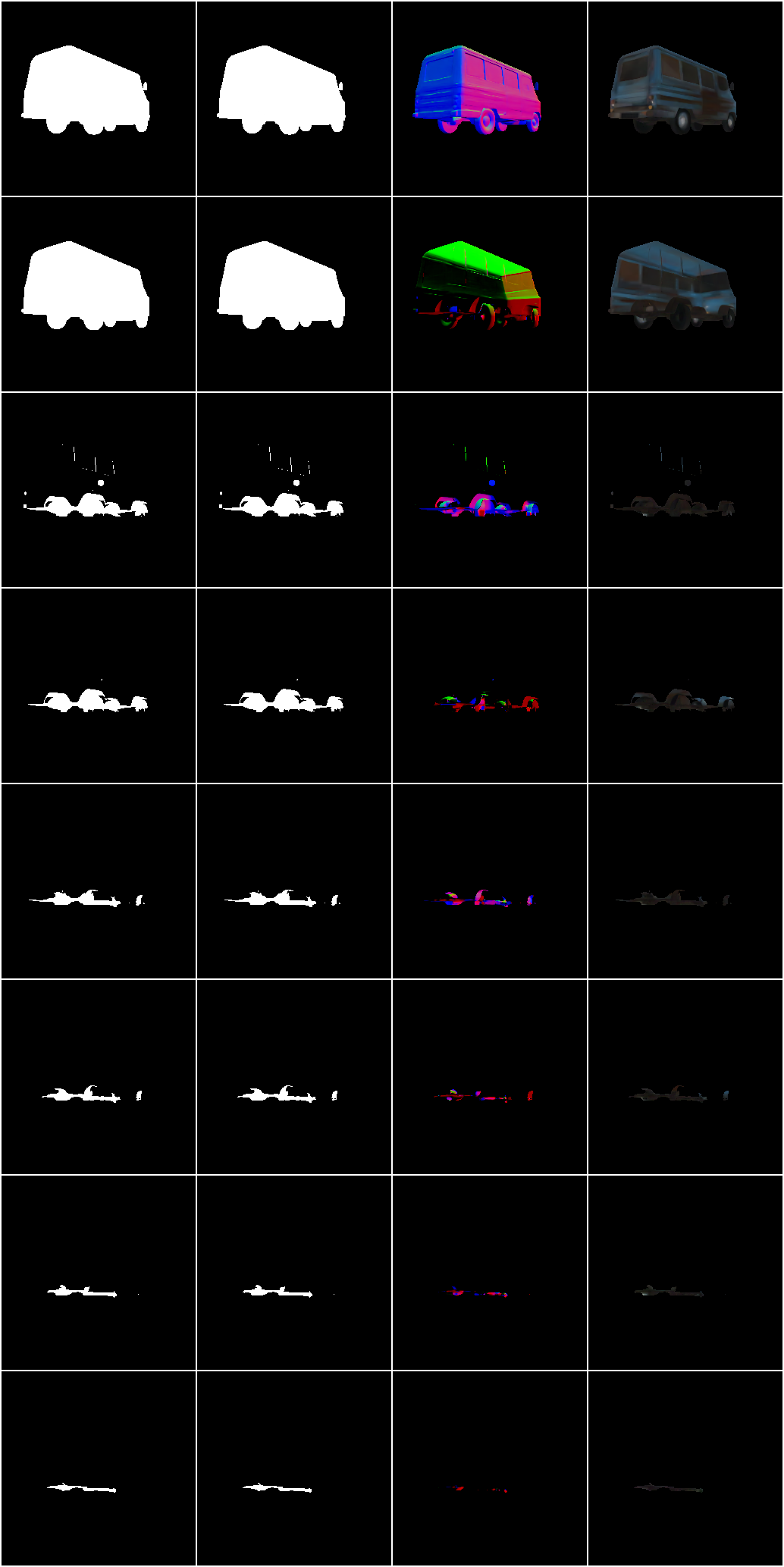

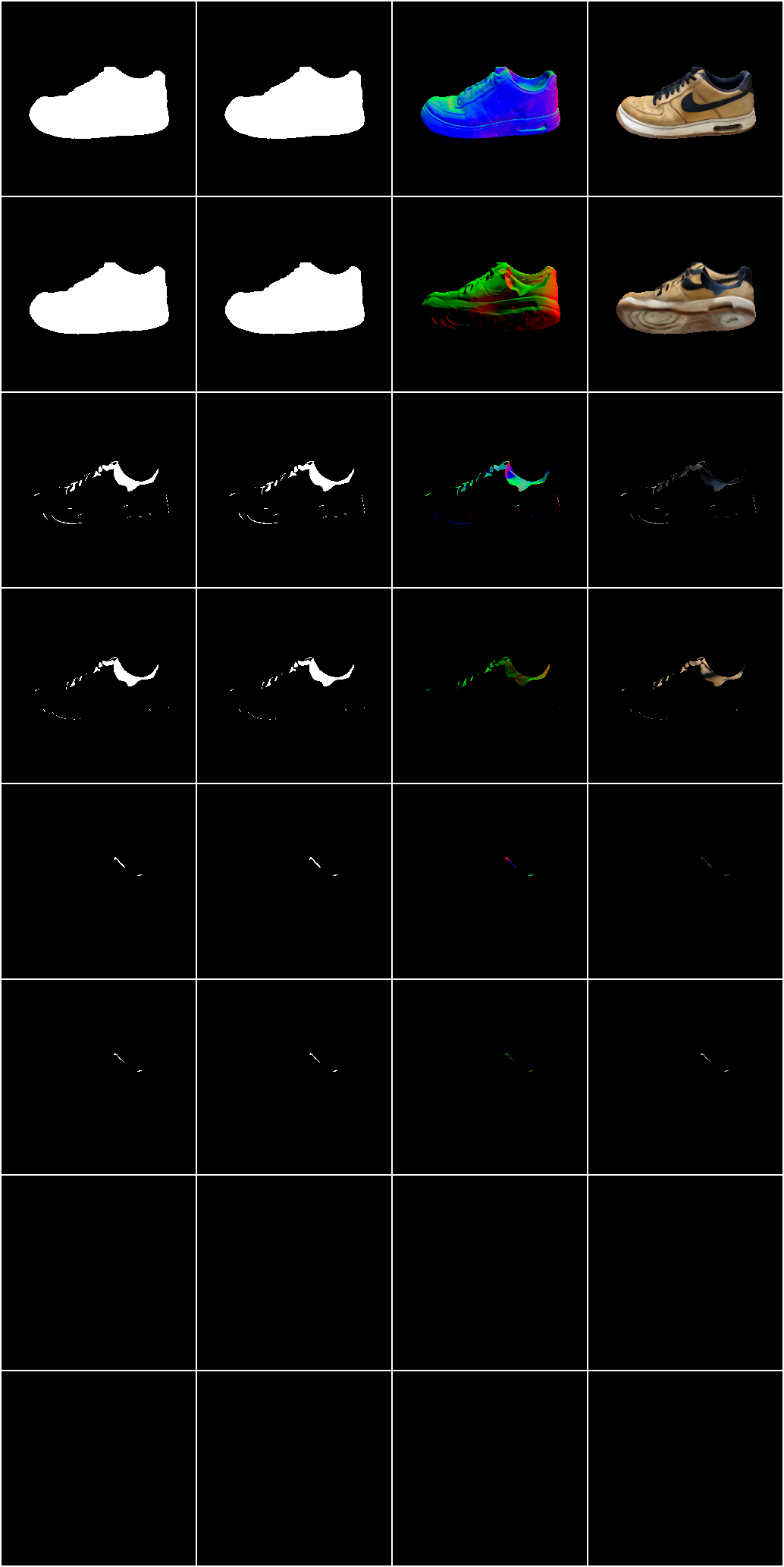

Samples of X-Ray

Fig. 1. Samples of our proposed X-Ray representation. The first row shows the raw 3D objects to be converted. The second row shows images rendered from random camera viewpoints. The subsequent rows show the hit (H), depth (D), normal (N), and color (C) maps of the X-Ray from the 1st to the 8th layer. Note that the number of layers in an X-Ray is not fixed; it varies with the complexity of the 3D object.
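Because each pixel's ray can cross a different number of surfaces, the per-pixel hit lists have to be arranged into a stack of layers, one H, D, N, C map per layer, before a video-style network can treat them as frames. The sketch below shows one way to do this with plain Python lists. `pack_layers`, its nested-list layout, and zero-filling of empty slots are assumptions made for illustration; passing a cap such as `num_layers=8` (the figure shows layers 1 through 8) truncates any deeper hits.

```python
ZERO3 = (0.0, 0.0, 0.0)


def pack_layers(pixel_hits, num_layers=None):
    """Pack per-pixel hit lists into layered hit/depth/normal/color maps.

    pixel_hits[y][x] is a list of (depth, normal, color) tuples sorted
    nearest first (hypothetical input format). Returns H, D, N, C, each
    indexed [layer][y][x]. Layer k holds the k-th surface hit by each
    pixel's ray; pixels with fewer hits get H = 0 and zero-filled values.
    """
    height, width = len(pixel_hits), len(pixel_hits[0])
    if num_layers is None:  # use as many layers as the deepest pixel needs
        num_layers = max((len(h) for row in pixel_hits for h in row), default=0)

    def grid(fill):
        # Fresh nested lists per layer and row; fill values are immutable.
        return [[[fill] * width for _ in range(height)] for _ in range(num_layers)]

    H, D, N, C = grid(0), grid(0.0), grid(ZERO3), grid(ZERO3)
    for y, row in enumerate(pixel_hits):
        for x, hits in enumerate(row):
            for k, (depth, normal, color) in enumerate(hits[:num_layers]):
                H[k][y][x] = 1
                D[k][y][x] = depth
                N[k][y][x] = normal
                C[k][y][x] = color
    return H, D, N, C


# Usage: a 1x2 image; the left ray crosses two surfaces, the right ray none.
pixels = [[[(3.0, (0.0, 0.0, -1.0), (1.0, 0.0, 0.0)),
            (7.0, (0.0, 0.0, 1.0), (1.0, 0.0, 0.0))],
           []]]
H, D, N, C = pack_layers(pixels)
print(H)  # → [[[1, 0]], [[1, 0]]]
```

Keeping an explicit hit map H, rather than relying on a sentinel depth, makes it unambiguous which layer entries correspond to real surfaces.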

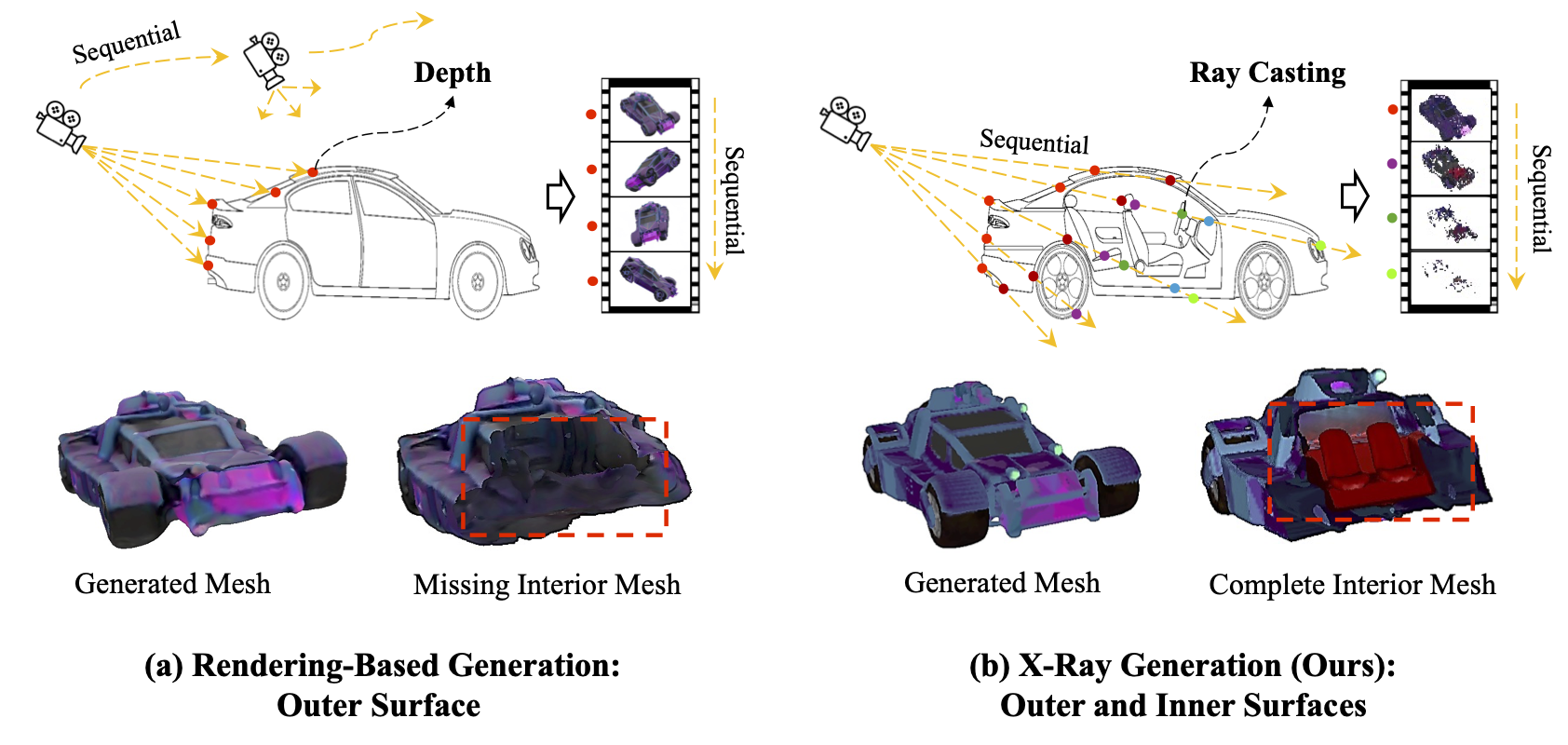

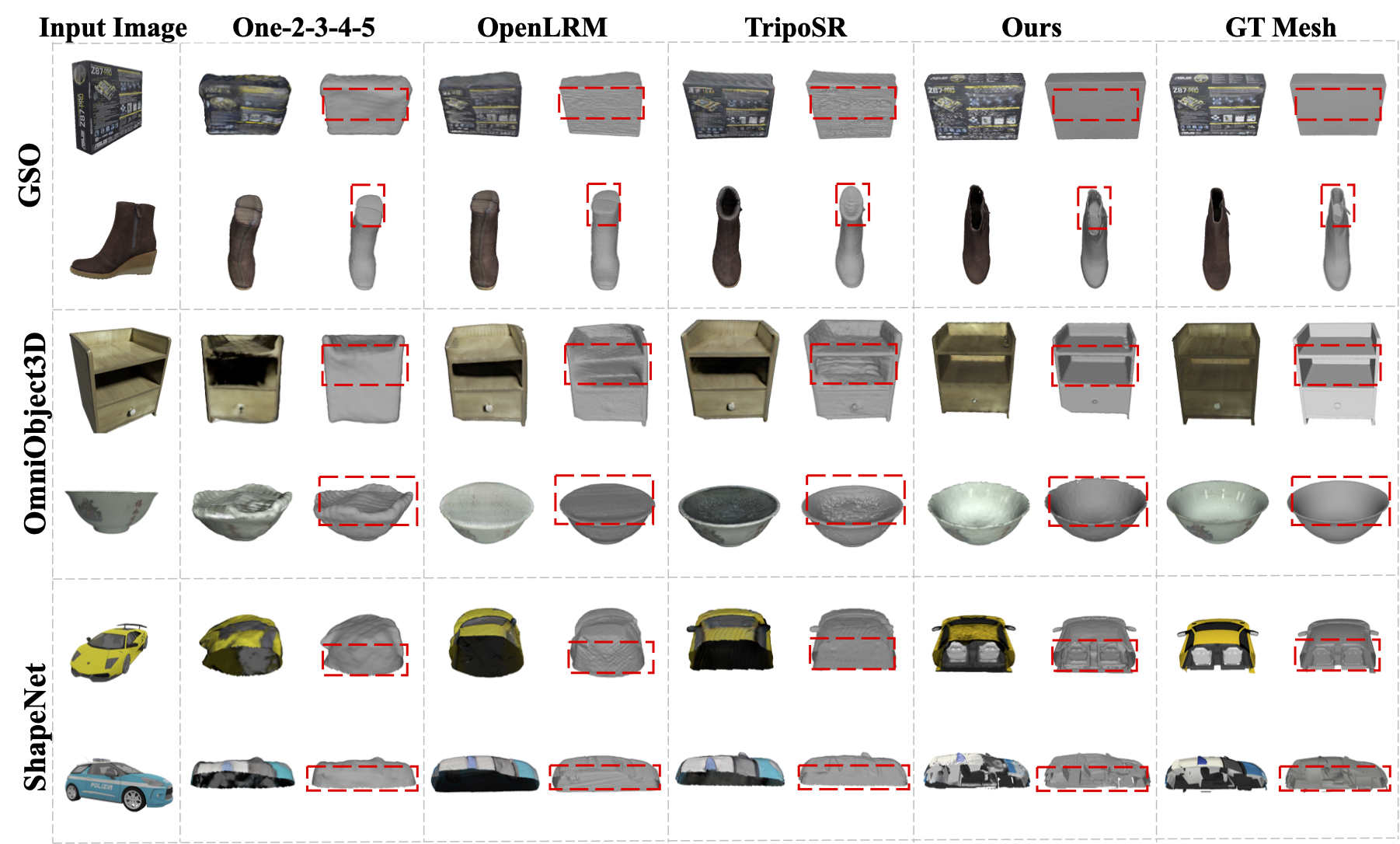

X-Ray (Ours) vs. Rendering-based Generation

Panels: Input Image | TripoSR | X-Ray (Ours)

Fig. 2. Comparison between our proposed X-Ray and rendering-based 3D generation. Rendering-based methods usually focus on the surfaces visible within the camera view, whereas X-Ray senses all visible and invisible surfaces and can therefore generate 3D objects with both their outer and inner shape and appearance.

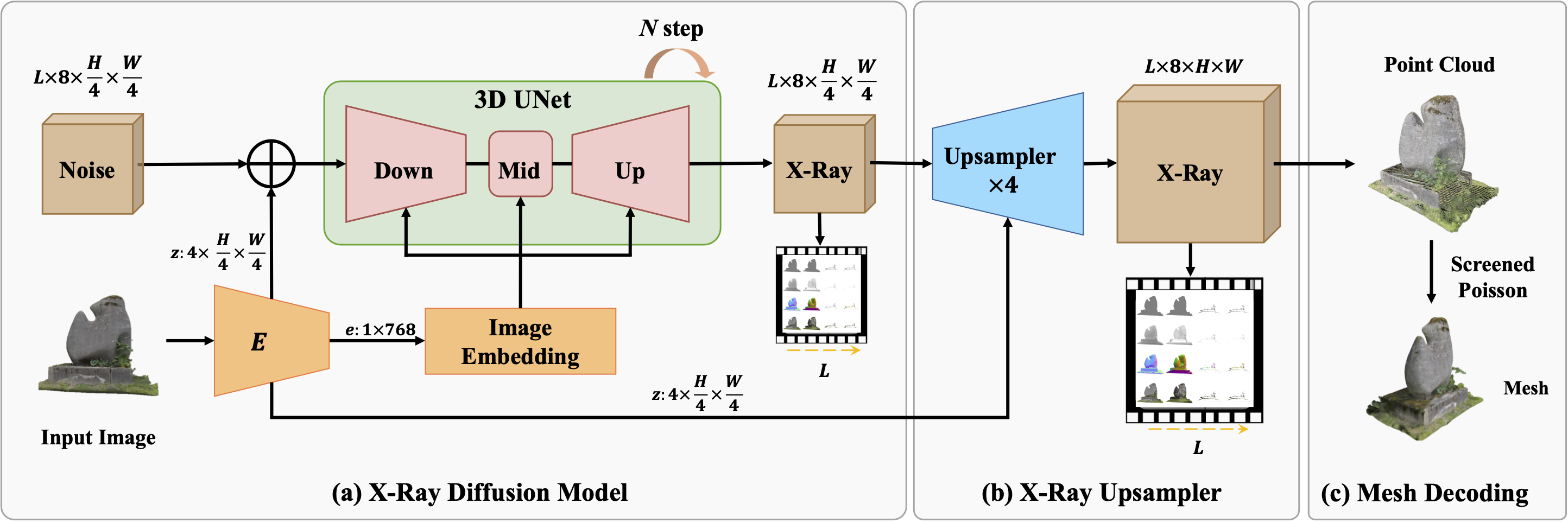

Generation Pipeline of X-Ray

Fig. 3. Overview of the 3D generator for our proposed X-Ray. In the first stage, a diffusion model synthesizes a low-resolution X-Ray from random noise, conditioned on a text prompt or an image. In the second stage, a Spatial-Temporal Upsampler produces a high-quality X-Ray. Finally, the X-Ray is converted directly into a 3D mesh by the decoding process.
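The decoding step can be illustrated by back-projecting every pixel whose hit map is set: the surface point lies at distance D along that pixel's camera ray and carries the stored normal and color. The sketch below assumes a hypothetical pinhole camera looking down +z (`ray_direction`, focal length in pixels, depth measured along the unit-length ray); the helper names are illustrative only. It yields an oriented, colored point cloud, corresponding to the "Encoded Point Cloud" stage in the results. Producing the final mesh would additionally require a surface-reconstruction step (for example, Poisson surface reconstruction); which method the authors use is not stated on this page.

```python
import math


def ray_direction(x, y, width, height, focal):
    """Unit direction through the center of pixel (x, y) for a hypothetical
    pinhole camera looking down +z, with image y mapped to camera +y."""
    dx = (x + 0.5 - width / 2) / focal
    dy = (y + 0.5 - height / 2) / focal
    norm = math.sqrt(dx * dx + dy * dy + 1.0)
    return (dx / norm, dy / norm, 1.0 / norm)


def decode_points(H, D, N, C, focal, origin=(0.0, 0.0, 0.0)):
    """Turn layered hit/depth/normal/color maps (indexed [layer][y][x]) into
    a list of (point, normal, color) tuples, one per layer pixel with H == 1."""
    points = []
    for k in range(len(H)):
        height, width = len(H[k]), len(H[k][0])
        for y in range(height):
            for x in range(width):
                if not H[k][y][x]:
                    continue  # no surface at this layer for this pixel
                d = ray_direction(x, y, width, height, focal)
                t = D[k][y][x]  # distance along the unit ray (assumption)
                p = tuple(o + t * di for o, di in zip(origin, d))
                points.append((p, N[k][y][x], C[k][y][x]))
    return points


# Usage: a 1x1 image whose single ray hits surfaces at depths 3 and 7.
H = [[[1]], [[1]]]
D = [[[3.0]], [[7.0]]]
N = [[[(0.0, 0.0, -1.0)]], [[(0.0, 0.0, 1.0)]]]
C = [[[(1.0, 0.0, 0.0)]], [[(1.0, 0.0, 0.0)]]]
for point, normal, color in decode_points(H, D, N, C, focal=1.0):
    print(point, normal)  # first line → (0.0, 0.0, 3.0) (0.0, 0.0, -1.0)
```

Because every layer is decoded, points on hidden inner surfaces are recovered alongside the visible ones, which is what lets the reconstructed mesh include interior structure.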

Image-to-3D

Panels: Input Image | Synthesized X-Ray | Encoded Point Cloud | Decoded Mesh

Text-to-3D

Panels: Input Prompt | Synthesized X-Ray | Encoded Point Cloud | Decoded Mesh (example prompt, truncated in the source: "… power supply")

Citation

@article{hu2024x,
  title={{X-Ray}: A Sequential {3D} Representation for Generation},
  author={Hu, Tao and Ge, Wenhang and Zhao, Yuyang and Lee, Gim Hee},
  journal={Advances in Neural Information Processing Systems},
  volume={37},
  pages={136193--136219},
  year={2024}
}